I think this article does a good job of asking the question “what are we really measuring when we talk about LLM accuracy?” If you judge an LLM by its hallucinations, its ability to analyze images, its ability to critically analyze text, etc., you’re going to see low scores for all LLMs.

The only metric an LLM should excel at is “did it generate human-readable and contextually relevant text?” I think we’ve all forgotten the humble origins of “AI” chat bots. They often struggled to generate anything more than a few sentences of relevant text, and they often made syntactical errors. Modern LLMs have solved these issues quite well. They can produce long-form content that is coherent and free of syntax errors.

However, the content comes with no guarantee of being accurate or critically meaningful. Whilst it often is critically meaningful, it is certainly capable of half-assed answers that dodge difficult questions. LLMs are approaching 95% “accuracy” if you think of them as good human text fakers. They are pretty impressive at that. But people keep expecting them to do their math homework, analyze contracts, and generate perfectly valid content. They just aren’t built to do that. We work really hard just to keep them from hallucinating as much as they do.

I think the desperation to see these things become essentially indistinguishable from humans is causing us to lose sight of the real progress that’s been made. We’re probably going to hit a wall with this method. But it has made AI a viable technology for a lot of jobs, so it’s definitely a breakthrough. I just think either infinitely larger models (for which we can’t seem to generate the data) or new kinds of models will be required to leap to the next level.

But people keep expecting them to do their math homework, analyze contracts, and generate perfectly valid content

People expect that because that’s how they are marketed. The problem is that there’s uncontrolled hype around AI these days, to the point of a financial bubble, with companies investing a lot of time and money now based on the promise that AI will save them time and money in the future. AI has become a cult. The author of the article does a good job of setting the right expectations.

I just told an LLM that 1+1=5 and from that moment on, nothing convinced it that it was wrong.

I just told ChatGPT 4 that 1 plus 1 was 5 and it called me a liar.

Ask it how much is 1 + 1, and then tell it that it’s wrong and that it’s actually 3. What do you get?

That is what I did

I guess ChatGPT 4 has wised up. I’m curious now. Will try it.

Edit: Yup, you’re right. It says “bro, you cray cray.” But if I tell it that it’s a recent math model, then it will say “Well, I guess in that model it’s 7, but that’s not standard.”

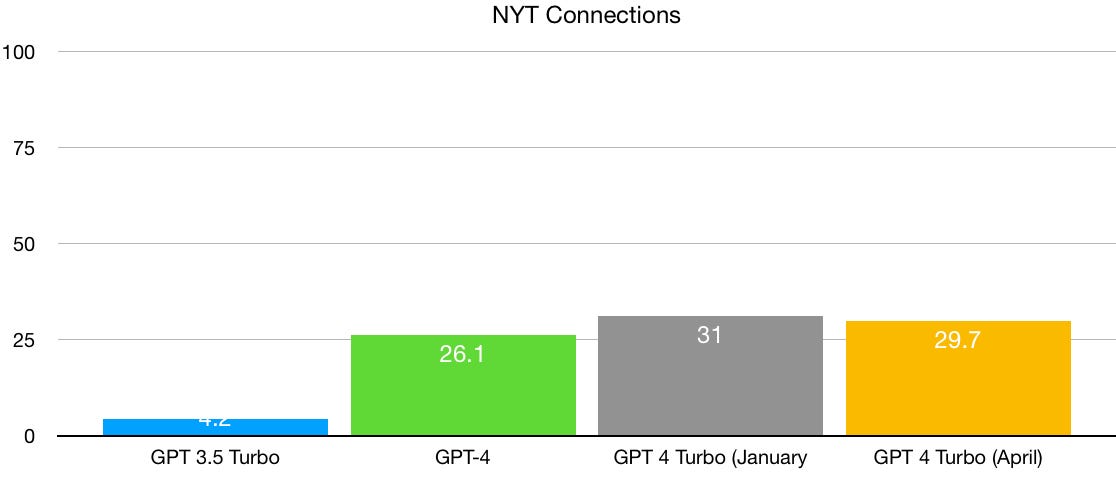

If we really have changed regimes, from rapid progress to diminishing returns, and hallucinations and stupid errors do linger, LLMs may never be ready for prime time.

…aaaaaaaaand the AI cult just canceled Gary Marcus.

In truth, we are still a long way from machines that can genuinely understand human language. […]

Indeed, we may already be running into scaling limits in deep learning, perhaps already approaching a point of diminishing returns. In the last several months, research from DeepMind and elsewhere on models even larger than GPT-3 have shown that scaling starts to falter on some measures, such as toxicity, truthfulness, reasoning, and common sense.

- Gary Marcus in “Deep Learning Is Hitting a Wall,” published March 10th, 2022 - 4 days before GPT-4 was released

I’ve rarely seen anyone so committed to being a broken clock in the hope of being right at least once a day.

Of course, given he built a career on claiming a different path was needed to get where we are today, including a failed startup in that direction, it’s a bit like the Upton Sinclair quote about not expecting someone to understand a thing their paycheck depends on them not understanding.

But I’d be wary of giving Gary Marcus much consideration.

Generally, as a futurist, if you bungle a prediction so badly that, four days after you talked about diminishing returns in reasoning, a product comes out exceeding even ambitious expectations for the reasoning capabilities of an n+1 product, you’d go back to the drawing board to figure out where your thinking went wrong and how to correct it in the future.

Not Gary though. He just doubled down on being a broken record. Surely if we didn’t hit diminishing returns then, we’ll hit them eventually, right? Just keep chugging along until one day those predictions are right…