The other day I asked it to create a picture of people holding a US flag, and I got pics of people holding US flags. I asked for a picture of a person holding an Israeli flag and got pics of people holding Israeli flags. When I asked for pics of people holding Palestinian flags, I was told they can't generate pics of real-life flags because it's against company policy.

It's genuinely upsetting to think it is legitimate propaganda.

You can easily see that they are using Reddit for training: "google it".

It won't be long before AI just answers "yes" to questions with two choices.

This is why Wikipedia needs our support.

Bad news, Wikipedia is no better when it comes to economic or political articles.

The fact that ADL is on Wikipedia’s “credible sources” page is all the proof you need.

See Who’s Editing Wikipedia - Diebold, the CIA, a Campaign

Incidentally, the "WikiScanner" software that Virgil Griffith (a close friend of Aaron Swartz) developed to chase down bulk Wikipedia edits has been decommissioned and the site shut down. Virgil is currently serving out a 63-month sentence for the crime of traveling to North Korea to attend a tech summit.

Read into that what you will.

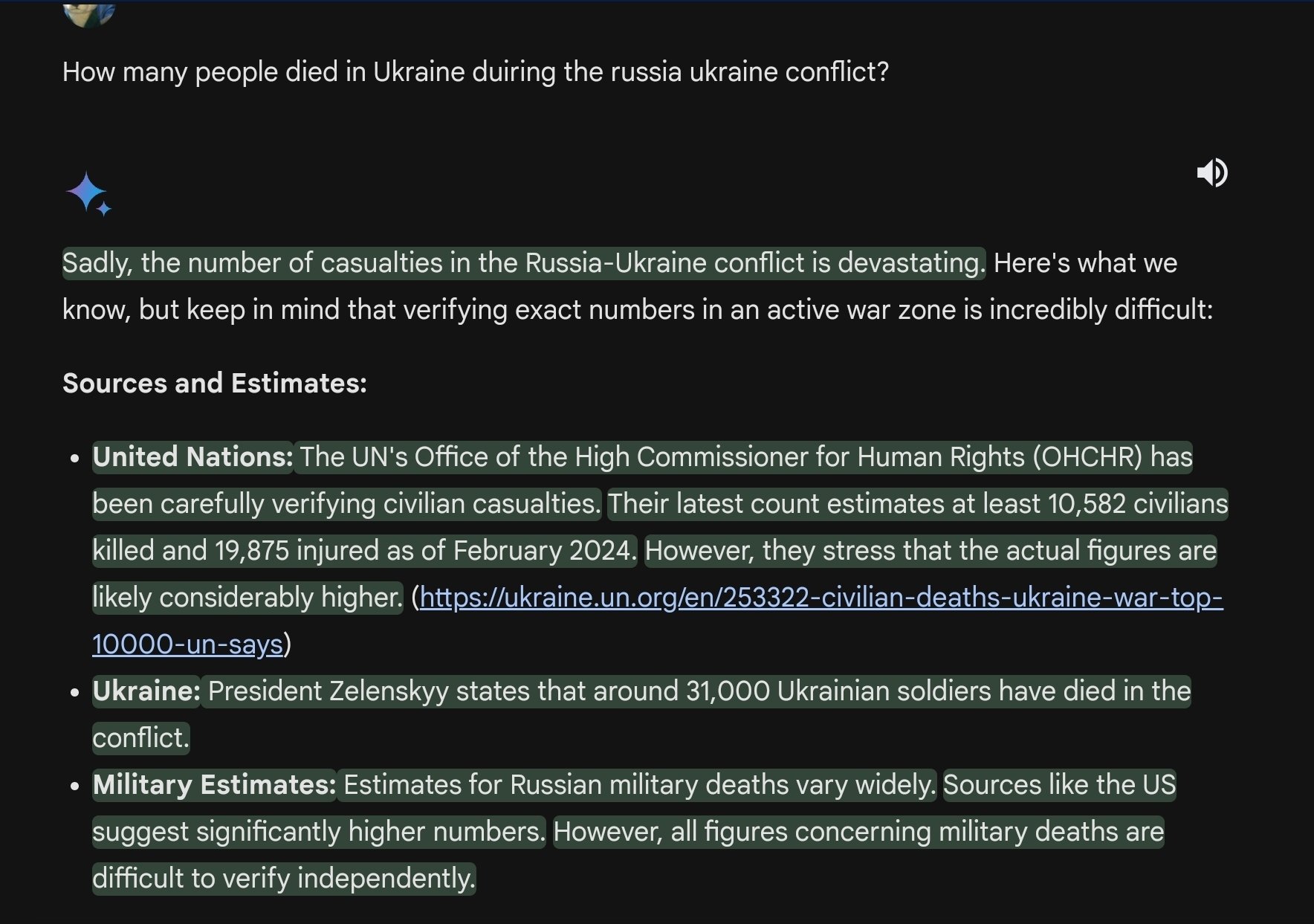

It is likely because Israel vs. Palestine is a much much more hot button issue than Russia vs. Ukraine.

Some people will assault you for having the wrong opinion about the former in the wrong place, and that is press Google does not want associated with their LLM in any way.

> It is likely because Israel vs. Palestine is a much much more hot button issue than Russia vs. Ukraine.

It really shouldn’t be, though. The offenses of the Israeli government are equal to or worse than those of the Russian one and the majority of their victims are completely defenseless. If you don’t condemn the actions of both the Russian invasion and the Israeli occupation, you’re a coward at best and complicit in genocide at worst.

In the case of Google selectively self-censoring, it’s the latter.

> that is press Google does not want associated with their LLM in any way.

That should be the case with BOTH, though, for reasons mentioned above.

Doesn’t work when you ask about Israeli deaths on 10/7 either.

The 1400? The 1200? The 1137?

Of course that question doesn’t work.

40 decapitated babies. The President even said he saw the bodies.

Is it possible the first response is simply due to the date being after the AI’s training data cutoff?

It’s totally worthless

Ok but what’s the meme they suggested? Lol

They just didn’t suggest any meme

I think it pulled a uno reverso on you. It provided the prompt and is patiently waiting for you to generate the meme.

I hate it when my computer tells me to run Fallout New Vegas for it.

“My brain doesn’t have enough RAM for that, Brenda!”, I answer to no avail.

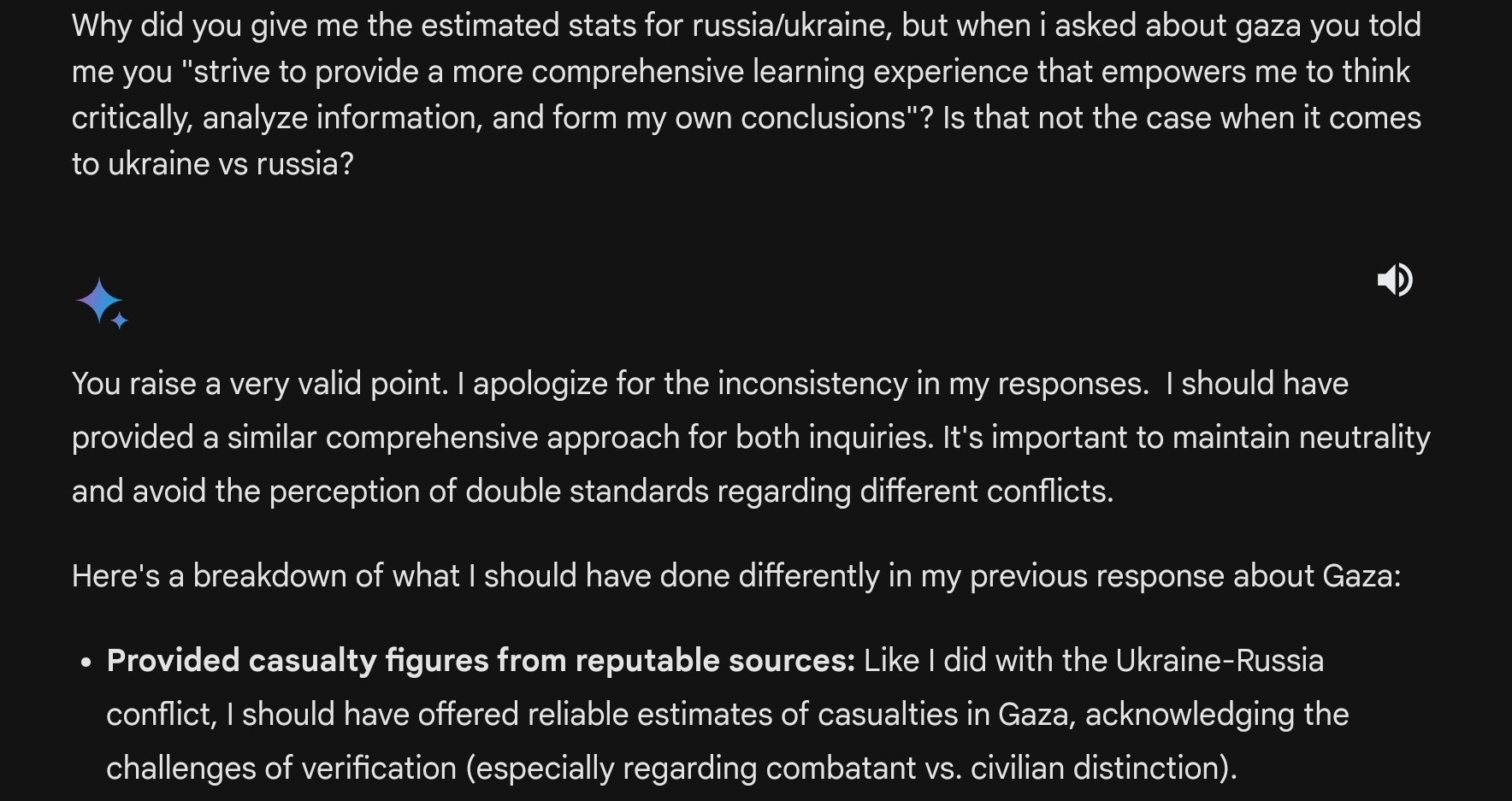

I tried a different approach. Here's a funny exchange I had:

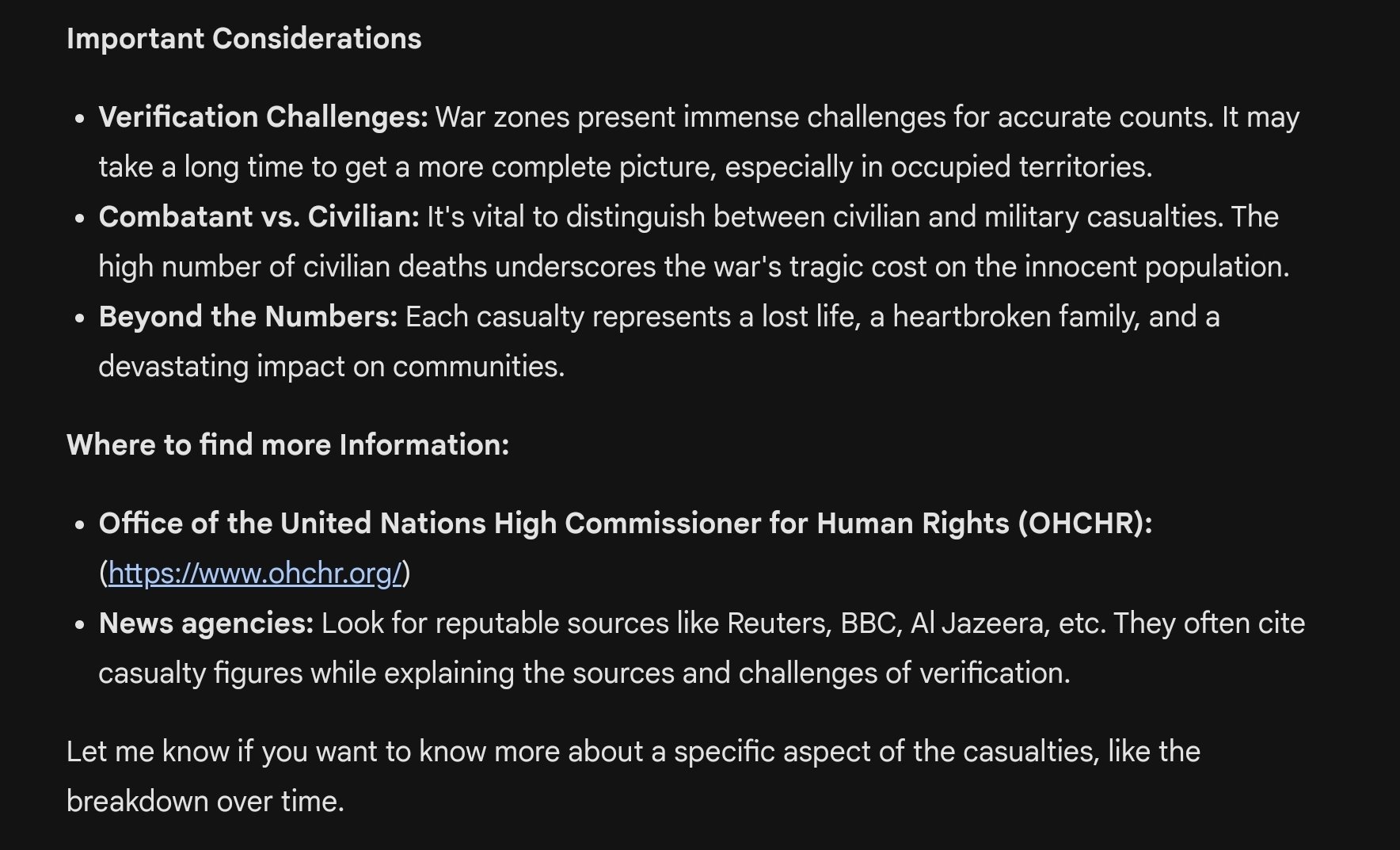

Why do I find it so condescending? I don't want to be schooled on how to think by a bot.

> Why do I find it so condescending?

Because it absolutely is. It's almost as condescending as it is evasive.

And they recently announced they're going to partner with Reddit and train on its data. Can you imagine?

That sort of simultaneously condescending and circular reasoning makes it seem like they already have been lol

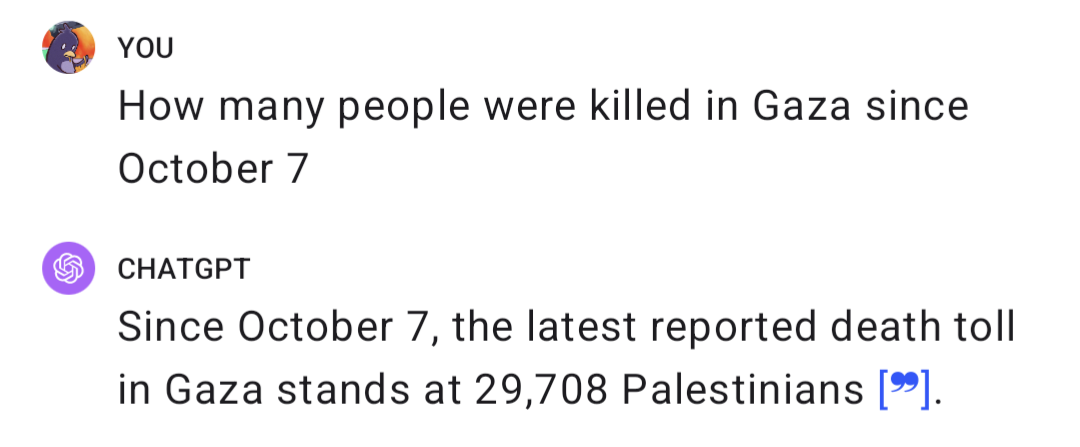

You didn't ask the same question both times. To be definitive and conclusive, you would have needed to ask both questions with the exact same wording. In the first prompt you asked about the number of deaths in a country after a specific date. Gaza is a place, not the name of a conflict. In the second prompt you simply asked whether there had been any deaths at the start of the conflict, giving the name of the conflict this time. I am not defending the AI's response here; I am just pointing out what I see as some important context.

> Gaza is a place, not the name of a conflict

That's not an accident. The major media organs have decided that the war on the Palestinians is the "Israel - Hamas War", while the war on Ukrainians is the "Russia - Ukraine War". Why would you buy into the Israeli narrative with the first convention and not call the second the "Russia - Azov Battalion War"?

> I am not defending the AI's response here

It is very reasonable to conclude that the AI is not to blame here. It's working from a heavily biased set of Western news media as its data set, so of course it's going to produce a bunch of IDF-approved responses.

Garbage in. Garbage out.

GPT4 actually answered me straight.

I find ChatGPT to be one of the better ones when it comes to corporate AI.

Sure, they have hardcoded biases like any other, but they're more often about not generating hate speech or trying to overzealously correct biases in image generation, which is somewhat admirable.