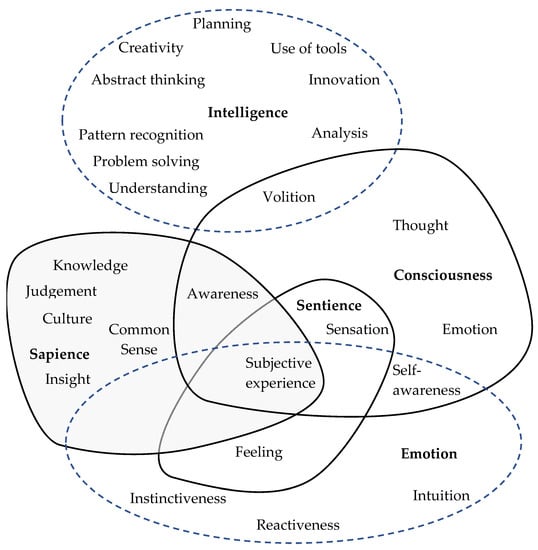

Yeah, I’ve lost count of the articles or comments claiming “AI can’t do X.” I test it right away and find that current models do X with no issue, then go back and notice the green ChatGPT icon or a comment about using the free version.

GPT-3.5 is a moron. State-of-the-art models have come a long way since then.

The most infuriating thing for me is the constant barrage of “LLMs aren’t AI” from people.

These people have no understanding of what they’re talking about.

Edit: to everyone downvoting me, look at this image:

If you want a refreshing take from the opposite perspective, you might enjoy this piece:

https://www.lesswrong.com/posts/gP8tvspKG79RqACTn/modern-transformers-are-agi-and-human-level

Thanks for that read. I agree with the author for the most part. I don’t really agree that current LLMs are a form of AGI, but they’re definitely close.

What isn’t up for debate is that LLMs are 100% AI. I think the reason people argue otherwise is that they conflate “intelligence” with concepts like sapience, sentience, and consciousness.

These people don’t understand that intelligence is a concept that can, and does, exist outside of consciousness.

The problem with ‘AGI’ is that it’s a nonsense term with no agreed-upon meaning. I remember describing one of Sam Altman’s definitions in a Hacker News discussion and being told “no one defines it that way.” It’s a term that means whatever the beholder finds it convenient to mean.

The article’s point was more that the term was originally coined to distinguish general AI from narrow AI, and by that original definition and distinction we’re already there (which I definitely agree with).

It’s not saying we’re already at AGI in the loose sense the term is used today. The comments offer a handful of better terms for that than AGI, but I’m sure we’ll keep using AGI anyway until it’s meaningless: a goalpost we never declare met, until one day in the far future we claim it was agreed to have been met years ago and that no one ever doubted it.

And yes, I agree that ‘sentience’ is a red herring when it comes to LLMs. A cockroach is sentient by the dictionary definition. But a cockroach can’t draw a simile to an Escher drawing in a discussion, which is perhaps the more impressive quality.