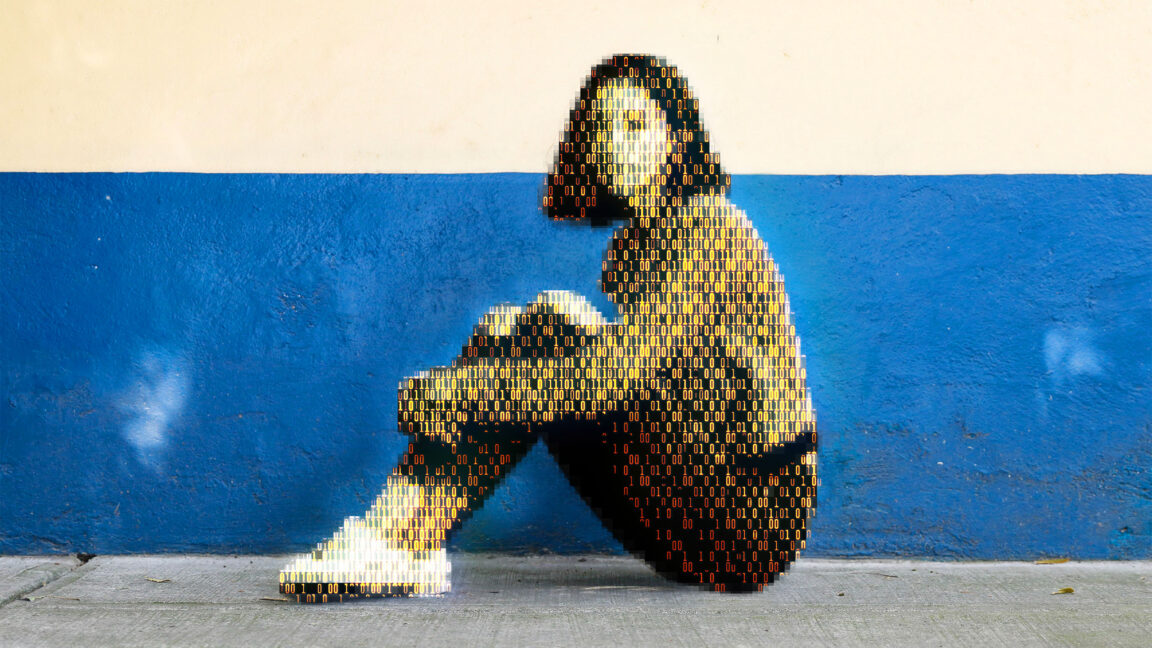

Today, a prominent child safety organization, Thorn, in partnership with a leading cloud-based AI solutions provider, Hive, announced the release of an AI model designed to flag unknown CSAM at upload. It’s the earliest AI technology striving to expose unreported CSAM at scale.

Man… That AI is going to be so fucked up when it gains sentience

Skynet’s real origin story. We might just deserve judgement day.

Oh we definitely do! Definitely for this, and definitely for many other things.

Not a single peep about false positives.

I’m sure it won’t be abused though. And if anyone does complain, just get their electronics seized and checked, because they must be hiding something!

Reminds me of the A cup breasts porn ban in Australia a few years ago, because only pedos would watch that

There was a porn studio that was prosecuted for creating CSAM. Brazil, I believe. Prosecutors claimed that the petite, A-cup woman was clearly underage. Their star witness was a doctor who testified that such underdeveloped breasts and hips clearly meant she was still going through puberty and couldn’t possibly be 18 or older. The porn star showed up to testify that she was in fact over 18 when they shot the film, and brought all her identification, including her birth certificate and passport. She also said something to the effect of: women come in all shapes and sizes, and a doctor should know better.

I can’t find an article. All I’m getting is GOP Trump pedo nominees and Brazil laws on porn.

I’m just glad they protected her

Aw man, I love all titties. Variety is the spice of life.

Believe it or not, straight to jail.

If this is the price I must pay, I will pay it, sir! No man should be deprived of privately viewing a consenting adult’s perfectly formed small tits. They can take my liberty, they can take my livelihood, but they will never take away my boner for puffy nipples on a small-chested half Japanese woman!

What is the charge? Biting a breast? A succulent Chinese breast?

Not to mention the self image impact such things would have on women with smaller breasts, who (as I understand it) generally already struggle with poor self image due to breast size.

This sort of rhetoric really bothers me. Especially when you consider that there are real adult women with disorders that make them appear prepubescent. Whether that’s appropriate for pornography is a different conversation, but the idea that anyone interested in them is a pedophile is really disgusting. That is a real, human, adult woman and some people say anyone who wants to love them is a monster. Just imagine someone telling you that anyone who wants to love you is a monster and that they’re actually protecting you.

Thorn, the company backed by Ashton Kutcher and which tried to get its way to monitor all messages in the EU via Chat Control. No thanks.

Just remember folks. Kutcher is a slimeball too.

The guy went from a D list star and hanging out with the likes of Danny Masterson and going to Diddy’s infamous parties - to suddenly overnight courting the US government and being the face of ‘helping’ children everywhere.

Yeah right……

People can grow and change. Not saying he did or didn’t. Just saying that people aren’t a monolith. It’s plausible he just grew and his views changed / evolved.

That being said, it’s highly convenient where he’s positioned himself these days…

I’d be wary of calling him guilty by association. Maybe when he realized who he was really hanging out with he was so horrified and disgusted that he just had to get involved and do something to fight back?

It’s awful coincidental that he seems to hang out with the ‘rapist’ crowd. Even going as far as writing a letter for Masterson as to how nice of a guy he is to try to get him a lenient sentence.

Even Hollywood has ostracized him and his wife - news sites recently reported they were looking to leave the country and let things cool off for a while.

I’m sure everyone is right though that keep posting here, that he is a swell guy who was just in the wrong place at the wrong time, multiple times. Several years worth of multiple times with wrong people. Just a coincidence.

The difference between us giving him a benefit of the doubt and claiming innocence and your take, is that you are labeling him a pedophile without proof. That’s a significant claim if false, and imo takes an assumption too far. Maybe he’s bad and it should be looked into, but saying he did something because he was on a show with and good friends with a guy that happened to be a rapist is wrong.

Wasn’t he also featured in a video about how he couldn’t wait until Hilary Duff and the Olsen twins turned 18 because he wanted to date them, when they were like 15?

I think all CSAM should be destroyed out of respect for the victims, not proliferated. I don’t care who is hanging onto this material or for what purpose.

How is this proliferating CSAM? Also, how do you expect them to find CSAM without having known images? It gives a really nice way to check based on hashes without having someone look at every picture on someone’s hard drive. With this AI it should greatly help in identifying new or unknown images while minimizing the number of actual people who have to see that stuff, and who get scarred from looking at such images. The only reason to be against this is if you are looking at CP and want it to be harder to find, or if you don’t understand how this technology is being used.
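
For anyone wondering what "check based on hashes" means in practice, here is a minimal sketch. It is an illustration under assumptions, not how any particular forensic tool works: the function names and file names are hypothetical, the inputs are harmless placeholder bytes, and it uses exact SHA-256 digests for simplicity, whereas production tools typically rely on perceptual hashes that survive resizing and re-encoding.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def triage(files: dict[str, bytes], known_hashes: set[str]) -> tuple[list[str], list[str]]:
    """Split file names into (matched, unknown) by comparing digests
    against a list of known digests. No one has to open the files."""
    matched: list[str] = []
    unknown: list[str] = []
    for name, data in files.items():
        if sha256_hex(data) in known_hashes:
            matched.append(name)
        else:
            unknown.append(name)
    return matched, unknown


# Hypothetical example with harmless placeholder contents.
files = {"a.bin": b"alpha", "b.bin": b"beta", "c.bin": b"gamma"}
known = {sha256_hex(b"beta")}  # assumed list of previously catalogued digests
matched, unknown = triage(files, known)
print(matched, unknown)
```

The limitation is visible in the sketch: anything whose digest is not already on the list lands in `unknown`, which is the gap a classifier for new material is meant to cover.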

How is this proliferating CSAM?

Sharing it with people and companies that it wasn’t being shared with before.

Also, how do you expect them to find CSAM without having known images?

The same way it is now: people reporting it and undercover police accounts. People recognise it.

without having someone look at every picture on someone’s hard drive

If it’s going to get used as evidence in court a human will have to review and confirm it. I don’t think “Because the AI said so” is going to convince juries.

The only reason to be against this is if you are looking at CP

Or if it’s you or someone you love who is in the CP. Having further copies of it on further hard drives, whether it’s so someone can bake it into their AI tool or any other purpose is wrong. That’s just my view though.

Sorry I cannot post a longer response, but I’d suggest you look up how this type of forensic software is developed and used. There are a few good documentaries on it if you look; one I remember watching was on Google’s team for this stuff.

The images are not exactly shared in that very few people have access to them, and they treat it very much like classified information so that only select people can see them.

These models would be developed using normal images and then trained in closed systems on the real images, where the developers see only the accuracy numbers and not the images themselves. No need to scar the developers who just want to work.

Nothing about how people get reported will change. The only difference is that this will allow the FBI to have a list of suspected CP and a list of normal images from a computer, allowing them to spend a fraction of the time looking at this stuff to document it. This is very important when you have people who have literally terabytes of the stuff and probably even more normal images. In general we like to minimize the time spent looking at such stuff because it is so scarring.

As for showing the images in court, in the US hashes are acceptable evidence, again we don’t like to scar people by showing them this stuff. Additionally after you’ve been shown the 100th picture of a baby being abused and the FBI is telling you they have 1000000 more, you’ll just take their word for it.

Anyways, hope you have a good one

me

no

Jesus Christ. If someone ever got their hands on this model they could use it to generate new material. The grossest possible AI model to date

No. This is an inference model, not a generative model. You generally cannot train a model for both, unless you do it on purpose, and they certainly did not (especially since inference models are way easier to train than generative models).

A generative model uses the classifier as part of its training. If you generate a picture of pure random noise, then iteratively pick random noise that the classifier says “looks” more like CSAM, then you can effectively generate images that the classifier says it’s 100% certain are CSAM. Whether or not that looks anything like what a human would consider to be CSAM depends on other factors, but it remains a possibility.

You are describing the way DeepDream works, not the way modern diffusion models work. It’s the difference between psychedelic dog faces and a highly adherent generative image of a German Shepherd.

I can’t imagine you’re going to get anything out of this model that actually looks like CSAM, unless there’s some sort of breakthrough in using these models for previously unrealized generative purposes.

I thought being able to do that was already a thing. This is designed to do the opposite.

I know, I know… bad actors and such.

…but if simple possession defines who a bad actor is…

The irony of this never ceases to amaze me.

It’s the earliest AI technology striving to expose unreported CSAM at scale.

horde-safety has been out for a year now. Just saying… It’s not a trained AI model in this way, but it’s still using Neural Networks (i.e. “AI Technology”)

This is a great development, albeit with a lot of soul crushing development behind it I assume. People who have to look at CSAM or whatever the acronym is have a miserable job, so I’m very supportive of trying to automate that away from people.

Yeah, I’m happy for AI to take this particular horrifying job from us. Chances are it will be overtuned (too strict), but if there’s a reasonable appeals process I could see it saving a lot of people the trauma of having to regularly view the worst humanity has to offer without major drawbacks.

… robo chocolate?

This seems like a potential actual good use of AI. Can’t have been much fun to train it though.

And is there any risk of people turning these kinds of models around and using them to generate images?

If AI was reliable, maybe. MAYBE. But guess what? It turns out that “advanced autocomplete” does a shitty job of most things, and I bet false positives will be numerous.

“detect new or previously unreported CSAM and child sexual exploitation behavior (CSE), generating a risk score to make human decisions easier and faster.”

False positives don’t matter if they stick to the stated intended purpose of making it easier to detect CSAM manually.
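
To put rough numbers on why the human review step carries so much weight, here is a back-of-the-envelope sketch. Every figure in it is a made-up assumption for illustration (upload volume, how rare true positives are, the detection rate, the false positive rate); none of it comes from Thorn or Hive, and the function name is hypothetical.

```python
def review_queue_stats(n_items: int, n_true: int, tpr: float, fpr: float) -> dict[str, float]:
    """Estimate what lands in a human review queue when a classifier
    flags items above some risk-score threshold.

    n_items: total items scanned (assumed)
    n_true:  how many of those are actually positive (assumed)
    tpr:     fraction of true positives that get flagged (assumed)
    fpr:     fraction of benign items that get flagged (assumed)
    """
    true_flags = n_true * tpr
    false_flags = (n_items - n_true) * fpr
    flagged = true_flags + false_flags
    precision = true_flags / flagged if flagged else 0.0
    return {
        "true_flags": true_flags,
        "false_flags": false_flags,
        "flagged": flagged,
        "precision": precision,
    }


# Illustrative assumptions only: 1M uploads, 100 real positives,
# 95% detection rate, 1% false positive rate.
stats = review_queue_stats(n_items=1_000_000, n_true=100, tpr=0.95, fpr=0.01)
print(stats)
```

With these assumed numbers, fewer than 1 in 100 flagged items is a real hit, even though the classifier sounds accurate on paper. That is tolerable when a flag only puts an item in front of a reviewer, and a serious problem if a flag is treated as a verdict.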

The problem is that they won’t.

Yes, AI tools, in the hands of skilled people, can be very helpful.

But “AI” in capitalism doesn’t mean “more effective workers”, it means “fewer workers.” The issue isn’t technological so much as cultural. You fundamentally cannot convince an MBA not to try to automate away jobs.

(It’s not even a money thing; it’s about getting rid of all those pesky “workers’ rights” that workers like to bring with us)