When Adobe Inc. released its Firefly image-generating software last year, the company said the artificial intelligence model was trained mainly on Adobe Stock, its database of hundreds of millions of licensed images. Firefly, Adobe said, was a “commercially safe” alternative to competitors like Midjourney, which learned by scraping pictures from across the internet.

But behind the scenes, Adobe also was relying in part on AI-generated content to train Firefly, including from those same AI rivals. In numerous presentations and public posts about how Firefly is safer than the competition due to its training data, Adobe never made clear that its model actually used images from some of these same competitors.

Oh hey, look. The cycle of AI ingesting garbage output from another AI model has begun. This can’t possibly impact quality or reliability in any way /s

Time to save the models we have now, 'cause they’ll never be quite the same.

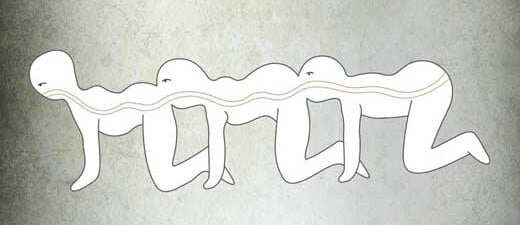

The AI centipede era has begun

AI ingesting the output of AI ingesting the output of AI…


I said it around 2 years ago when the term “ethical” was first coined by media when talking about AI. Ethical in this context just means those who own data centers and made a huge effort to extract and process user data (Facebook, Google, Amazon, etc.) hold all the cards. Never mind the technology being so new that users couldn’t possibly have consented to it years ago. They just update their TOS and get that consent retroactively while lawmakers are absent, happily watching their stocks go up.

It’s really frustrating to see people get riled up and manipulated into thinking that legislation outlawing anything “unethical” is in their interest.

It’s a fantasy to think individual creators will get a slice of the pie and not just the data brokers. It’s also a convenient way to destroy the competition.

People are getting emotional, and that is going to be used to build one of the grossest monopolies ever seen.

Adobe said a relatively small amount — about 5% — of the images used to train its AI tool was generated by other AI platforms. “Every image submitted to Adobe Stock, including a very small subset of images generated with AI, goes through a rigorous moderation process to ensure it does not include IP, trademarks, recognizable characters or logos, or reference artists’ names,” a company spokesperson said.

Adobe Stock’s library has boomed since it began formally accepting AI content in late 2022. Today, there are about 57 million images, or about 14% of the total, tagged as AI-generated images. Artists who submit AI images must specify that the work was created using the technology, though they don’t need to say which tool they used. To feed its AI training set, Adobe has also offered to pay for contributors to submit a mass amount of photos for AI training — such as images of bananas or flags.

AIuroboros

the problem is “intellectual property” existing at all, just get rid of it entirely and make everything public domain

AI daisy chain. One AI output is another AI input.

I’ve seen Multiplicity enough times to know how this turns out.

You’ve been watching the original movie multiple times? I just watch the most recent recording of myself describing the movie, and then record a new description over that, with each successive generation.