- cross-posted to:

- aicompanions@lemmy.world

My friend is a technical writer and just lost her job because “ChatGPT can do what you do!”

She then informed me that she knew 11 other people who got fired due to the same thing. And now those companies are realizing how badly they fucked up and are frantically trying to rehire.

That’s like firing an accountant because Excel can do what they do. Lol

My experience tells me that GPT is only good if a trained professional is behind the screen. If you fire a technician or a professional and fully replace them with GPT, it’ll be on you to see how much it backfires.

Replacing humans with AI is a bit like replacing a trained professional with a minimum-wage call center worker from a third world country. Sure, it saves money, and they can kind of do the job well enough that if you squint it looks like the same thing. But the output is inevitably going to be subpar unless you retain a human expert manager.

Anyone who has ever had to deal with code from India knows this all too well.

I hope she says no.

I don’t think OpenAI should be offering ChatGPT 3.5 at all except via the API for niche uses where quality doesn’t matter.

For human interaction, GPT 4 should be the minimum.

4 is worse today than it was a year ago.

As intended. LLMs are either good or easy to control and censor/direct in what they answer. You can’t have both. A human with actual intelligence can self-censor or intelligently evade or circumvent compromising answers; LLMs can’t do that because they’re not actually intelligent. A product has to be controllable by its client, so, to control it, you have to lobotomize it.

They do seem capable of some level of self-censorship, but the bigger issue is just fundamentally how they’re programmed. The current models have to use the context window to essentially think. That’s why prompts like “explain step by step” help so much: the AI can use its own response window to do some of the thought processing.

It’s like if you didn’t have the ability to have internal thoughts and had to say everything you were thinking out loud in order to be able to think about it. Inevitably you’re going to say inappropriate things because in order to get to the appropriate thing you have to be able to think about the inappropriate thing first. But if all you can do is type what you think then you’re stuck.

AI companies are well aware of this problem and are fixing it but a lot of the currently available models are still based on the old philosophy.
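As a rough illustration of the “explain step by step” point above, here is a minimal Python sketch that only builds the prompt text. The function name `build_prompt` and the exact instruction wording are made up for the example; nothing here calls a real model or API.

```python
def build_prompt(question: str, step_by_step: bool = False) -> str:
    """Return plain prompt text, optionally asking the model to reason first.

    Hypothetical helper for illustration only; it does not call any model.
    """
    if step_by_step:
        # Asking for the reasoning first gives the model room in its own
        # response to work through the problem before committing to an answer.
        return (
            f"{question}\n\n"
            "Explain step by step, then give the final answer on its own line."
        )
    return question


direct = build_prompt("What is 17 * 24?")
reasoned = build_prompt("What is 17 * 24?", step_by_step=True)
print(reasoned)
```

The only difference between the two prompts is the extra instruction, which is the whole trick: the question stays the same, but the model is invited to write out intermediate work.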

You have inadvertently made an excellent argument for freedom of / unregulated speech online and in other spaces.

I know however that in practice people think the bad thing, say it and then find a million voices to echo it and instead of learning they become radicalised.

But your post outlines the idealistic view.

Neither is that good. Both need a ton of human oversight, preferably from a human who knows the source material fed to the machine.

Yeah, I’ve lost count of the number of articles or comments going “AI can’t do X” and then immediately testing and seeing that the current models absolutely do X no issue, and then going back and seeing the green ChatGPT icon or a comment about using the free version.

GPT-3.5 is a moron. The state of the art models have come a long way since then.

The most infuriating thing for me is the constant barrage of “LLMs aren’t AI” from people.

These people have no understanding of what they’re talking about.

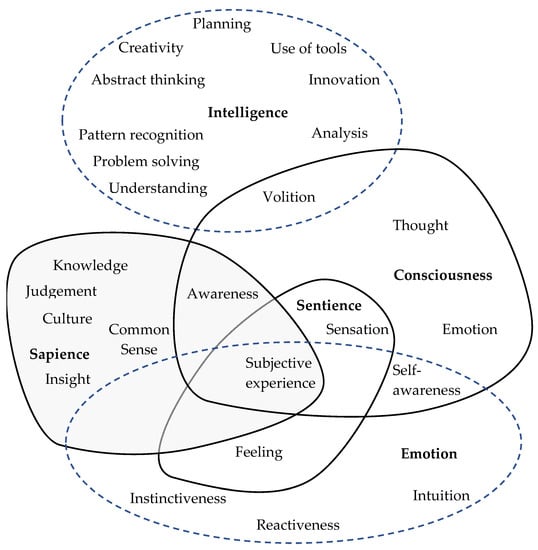

Edit: to everyone downvoting me, look at this image.

If you want a refreshing opposite version of that comment perspective, you might enjoy this piece:

https://www.lesswrong.com/posts/gP8tvspKG79RqACTn/modern-transformers-are-agi-and-human-level

Thanks for that read. I definitely agree with the author for the most part. I don’t really agree that current LLMs are a form of AGI, but it’s definitely close.

But what isn’t up for debate is the fact that LLMs are 100% AI. There’s no debate there. But I think the reason why people argue that is because they conflate “intelligence” with concepts like sapience, sentience, consciousness, etc.

These people don’t understand that intelligence is a concept that can, and does, exist outside of consciousness.

The problem with ‘AGI’ is that it’s a nonsense term with no agreed upon meaning. I remember in a discussion on Hacker News describing one of Sam Altman’s definitions and being told by someone “no one defines it that way.” It’s a term that means whatever the eye of the beholder finds it convenient to mean.

The article’s point was more that when the term was originally coined it was to distinguish from narrow AI, and according to that original definition and distinction we’re already there (which I definitely agree with).

It’s not saying we’re already at AGI as the term is loosely used today. The comments there offer a handful of better options for that term than AGI, though in spite of that I’m sure we’ll continue to use AGI to the point of meaninglessness, as a goalpost we’ll never define as met until one day in the far future we claim it’s always been agreed upon as having been met years ago and no one ever doubted it.

And yes, I agree that ‘sentience’ is a red herring discussion point when it comes to LLMs. A cockroach is sentient by the dictionary definition. But a cockroach can’t make similes to Escher drawings in a discussion, which is perhaps the more impressive quality.

CNET can generate more articles for free